If you’ve ever wondered why some AI models recognize objects flawlessly while others fail at basic visual tasks, the answer often comes down to one thing: image annotation quality. Behind every reliable computer vision system is a carefully executed image annotation service that translates raw images into meaningful training data. This guide is written to help you understand not just what image annotation is, but why it matters, how it’s done in practice, and what separates average results from production-ready AI.

By the end of this article, you’ll have a clear, practical understanding of image annotation techniques, common pitfalls, tooling decisions, and best practices that actually hold up in real-world AI projects.

Image annotation is the process of labeling visual data so machines can interpret and learn from it. At its core, image annotation techniques transform unstructured images into structured datasets that algorithms can understand. This step is foundational for training, validating, and improving computer vision models.

What’s often overlooked is that annotation is not a mechanical task. It is a design decision. The way data is labeled determines what the model learns, what it ignores, and how it behaves in edge cases. Poor annotation leads to biased, brittle, or inaccurate models. High-quality annotation enables systems that scale reliably in production. In practice, image annotation impacts industries such as healthcare diagnostics, autonomous vehicles, retail analytics, security systems, and industrial automation. In each case, the cost of annotation errors is not theoretical; it shows up as missed detections, false positives, or unsafe decisions.

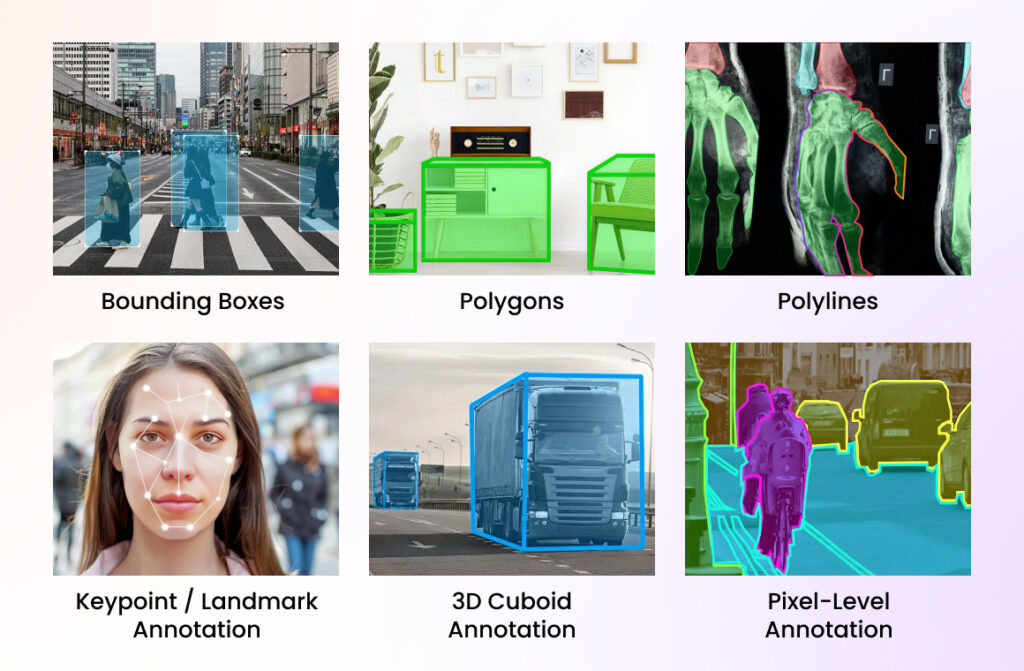

Image annotation uses various methods to mark visual data depending on the complexity and goals of the project.

The most common methods are:

Bounding boxes are rectangular labels drawn around objects of interest in an image. They are widely used in object detection tasks where approximate object location is sufficient. This method is fast, cost-effective, and ideal for applications like product detection, vehicle detection, and people counting.
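As a rough illustration of what a bounding box label looks like as data, here is a minimal sketch that converts one box between three widely used layouts: corner coordinates, the COCO layout (top-left corner plus width and height), and the normalized YOLO layout (center plus width and height, each divided by the image size). The function names and the sample box are assumptions made for this example, not part of any particular tool.

```python
from typing import Tuple

Box = Tuple[float, float, float, float]


def xyxy_to_coco(box: Box) -> Box:
    """Corner format (x_min, y_min, x_max, y_max) -> COCO (x, y, width, height)."""
    x_min, y_min, x_max, y_max = box
    return (x_min, y_min, x_max - x_min, y_max - y_min)


def xyxy_to_yolo(box: Box, img_w: int, img_h: int) -> Box:
    """Corner format -> YOLO (x_center, y_center, width, height), normalized to 0..1."""
    x_min, y_min, x_max, y_max = box
    return (
        (x_min + x_max) / 2 / img_w,
        (y_min + y_max) / 2 / img_h,
        (x_max - x_min) / img_w,
        (y_max - y_min) / img_h,
    )


# Hypothetical product box inside a 640x480 image.
box = (100, 50, 300, 250)
print(xyxy_to_coco(box))            # (100, 50, 200, 200)
print(xyxy_to_yolo(box, 640, 480))
```

Mixing up these layouts is an easy way to corrupt a dataset silently, so it helps to state the expected format in the annotation guidelines.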

Polygon annotation outlines objects using multiple connected points to capture their exact shape. It is more precise than bounding boxes and is commonly used when object boundaries matter, such as in medical imaging, satellite imagery, and manufacturing quality inspection.
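To see why a polygon can be more precise than a box, the sketch below computes a polygon's area with the shoelace formula and compares it with the area of the smallest box that encloses it. The helper names and the triangle are illustrative assumptions.

```python
def polygon_area(points):
    """Shoelace formula. Points are (x, y) vertices listed in order, in pixels."""
    total = 0.0
    n = len(points)
    for i in range(n):
        x1, y1 = points[i]
        x2, y2 = points[(i + 1) % n]  # wrap around to close the polygon
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0


def enclosing_box(points):
    """Smallest axis-aligned box (x_min, y_min, x_max, y_max) around the polygon."""
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    return (min(xs), min(ys), max(xs), max(ys))


triangle = [(0, 0), (10, 0), (0, 10)]
x_min, y_min, x_max, y_max = enclosing_box(triangle)
box_area = (x_max - x_min) * (y_max - y_min)

print(polygon_area(triangle))             # 50.0
print(polygon_area(triangle) / box_area)  # 0.5
```

In this toy case the box is half background, and a model trained on the box alone would see those pixels as part of the object.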

Polylines are used to annotate linear structures such as roads, lanes, power lines, or pipelines. Instead of enclosing an area, they trace a path or direction, making them ideal for mapping, navigation, and infrastructure analysis.

Keypoint annotation identifies specific points on an object, such as facial landmarks, human joints, or object corners. This type is essential for pose estimation, facial recognition, gesture detection, and motion tracking applications.
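One common way to store keypoints is the COCO convention: a flat list of `x, y, v` triplets, where `v` is 0 for a point that is not labeled, 1 for labeled but not visible, and 2 for labeled and visible. The sketch below parses such a list; the keypoint names and coordinates are made up for illustration.

```python
def parse_keypoints(flat, names):
    """Turn a flat [x1, y1, v1, x2, y2, v2, ...] list into {name: (x, y, v)}."""
    if len(flat) != 3 * len(names):
        raise ValueError("expected three values per keypoint")
    return {
        name: (flat[3 * i], flat[3 * i + 1], flat[3 * i + 2])
        for i, name in enumerate(names)
    }


def count_labeled(flat):
    """Number of keypoints with visibility flag greater than 0."""
    return sum(1 for v in flat[2::3] if v > 0)


# Hypothetical facial landmarks; the right eye was not labeled.
names = ["nose", "left_eye", "right_eye"]
flat = [120, 80, 2, 110, 70, 2, 0, 0, 0]

print(parse_keypoints(flat, names)["nose"])  # (120, 80, 2)
print(count_labeled(flat))                   # 2
```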

3D cuboids label objects in three-dimensional space by capturing height, width, and depth. This technique is critical for autonomous driving and robotics, where understanding spatial relationships and object orientation is required.

Pixel-level annotation assigns a label to every individual pixel in an image. It provides the highest level of detail and is used in semantic and instance segmentation tasks where precise region understanding is necessary, such as medical diagnostics and scene segmentation.

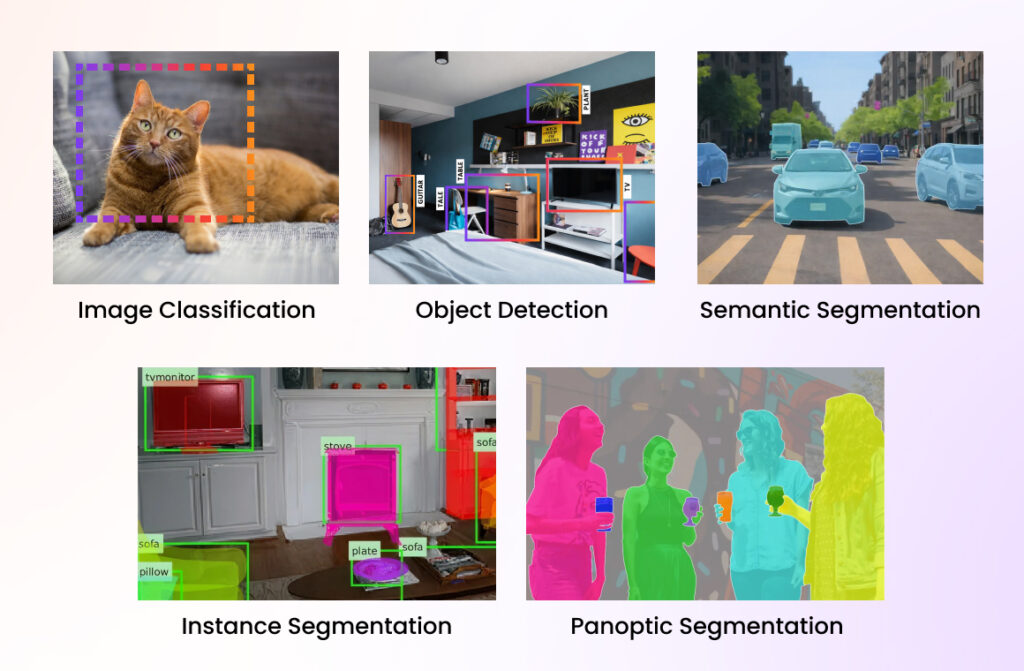

Different AI use cases require different annotation strategies. Choosing the right image annotation techniques is less about preference and more about the problem you’re solving.

Image classification assigns a single label to an entire image based on its overall content. The model learns to recognize patterns, colors, and shapes to determine which category an image belongs to. This technique does not identify object location, only what is present in the image. It is best suited for use cases where object position is not important. Image classification is often the starting point for simpler AI applications. It requires less annotation effort compared to other techniques.

Example:

Classifying medical images as normal or abnormal in a diagnostic screening system.

Object detection identifies and locates multiple objects within an image using bounding boxes. Unlike image classification, it answers both what the objects are and where they are located. This technique is widely used when object position, count, or interaction matters. It balances accuracy and annotation effort, making it popular for many real-world applications. Many object detection models are also fast enough to run in real time.
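The "where" part of object detection is usually scored with intersection over union (IoU): the overlap between two boxes divided by the area they cover together. The same measure can be used to compare two annotators' boxes for the same object. This is a minimal sketch assuming boxes in `(x_min, y_min, x_max, y_max)` pixel coordinates.

```python
def iou(box_a, box_b):
    """Intersection over union of two (x_min, y_min, x_max, y_max) boxes."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b

    # Width and height of the overlap; zero when the boxes do not intersect.
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h

    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


print(iou((0, 0, 10, 10), (0, 0, 10, 10)))    # 1.0
print(iou((0, 0, 10, 10), (20, 20, 30, 30)))  # 0.0
print(round(iou((0, 0, 10, 10), (5, 5, 15, 15)), 3))  # 0.143
```

An IoU of 0.5 is a frequently used cutoff for counting a predicted box as a match, though the right threshold depends on the application.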

Example:

Detecting vehicles, pedestrians, and traffic signs in autonomous driving systems.

Semantic segmentation labels every pixel in an image with a class, allowing the model to understand different regions. All objects of the same class are treated as one group, without distinguishing individual instances. This technique provides a deeper understanding of image context and structure. It is especially useful when area coverage matters more than object count. Annotation is more detailed and time-intensive.
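A semantic segmentation label is typically stored as a grid the same size as the image, where each cell holds a class index. The sketch below uses a tiny hand-written mask and an assumed class mapping to show how area coverage per class falls straight out of that representation.

```python
from collections import Counter

# Hypothetical class indices for this example.
CLASS_NAMES = {0: "background", 1: "road", 2: "vegetation"}


def class_coverage(mask, class_names):
    """Fraction of pixels assigned to each class in a 2D mask of class indices."""
    counts = Counter(pixel for row in mask for pixel in row)
    total = sum(counts.values())
    return {class_names[c]: counts[c] / total for c in sorted(counts)}


mask = [
    [1, 1, 2, 2],
    [1, 1, 2, 0],
    [1, 1, 0, 0],
]

print(class_coverage(mask, CLASS_NAMES))
# {'background': 0.25, 'road': 0.5, 'vegetation': 0.25}
```

Note that nothing in this mask says how many separate roads or trees there are, which is exactly the limitation described above.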

Example:

Segmenting roads, sidewalks, buildings, and vegetation in satellite imagery.

Instance segmentation combines object detection and semantic segmentation. It identifies each object separately while also labeling every pixel belonging to that object. This allows the model to distinguish between multiple objects of the same class. It is more complex and computationally expensive but delivers higher precision. This technique is essential for crowded or overlapping objects.
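One simple way to picture an instance segmentation label is a grid of instance IDs plus a lookup from each ID to its class. The layout below is an assumption chosen for clarity (real tools use several encodings), but it shows how two objects of the same class stay separate.

```python
from collections import Counter


def instance_areas(instance_mask):
    """Pixel count per instance ID; ID 0 is treated as background."""
    return dict(Counter(p for row in instance_mask for p in row if p != 0))


def instances_per_class(instance_mask, instance_class):
    """How many distinct instances of each class appear in the mask."""
    ids = instance_areas(instance_mask).keys()
    return dict(Counter(instance_class[i] for i in ids))


# Two hypothetical people; each has its own ID even though the class is the same.
instance_mask = [
    [1, 1, 0, 2],
    [1, 1, 0, 2],
    [0, 0, 0, 2],
]
instance_class = {1: "person", 2: "person"}

print(instance_areas(instance_mask))                       # {1: 4, 2: 3}
print(instances_per_class(instance_mask, instance_class))  # {'person': 2}
```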

Example:

Separating individual people in a crowded public space for retail analytics.

Panoptic segmentation unifies semantic and instance segmentation into a single framework. It labels every pixel in the image while also distinguishing individual object instances where applicable. This technique provides a complete scene understanding, combining background context and object-level details. It is commonly used in advanced computer vision systems. Panoptic segmentation requires high-quality annotation and strong computational resources.

Example:

Understanding full street scenes by identifying roads, buildings, vehicles, and individual pedestrians simultaneously.

A production image annotation workflow typically follows the steps below.

Start by clearly defining what the model needs to learn. Identify the use case, target outputs, and success criteria so annotation aligns with the final AI objective.

Gather representative image data covering real-world variations. Clean the dataset by removing duplicates, low-quality images, or irrelevant samples before annotation begins.
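Removing exact duplicates is the easiest part of this cleanup to automate. The sketch below hashes file contents with SHA-256 and keeps the first file for each unique hash. It takes a plain dictionary of file names to bytes so it runs without any image files, and it only catches byte-identical copies; resized or re-encoded near-duplicates need a different approach, such as perceptual hashing, which is not shown here.

```python
import hashlib


def deduplicate(images):
    """images maps file name -> raw bytes. Returns (kept names, dropped pairs).

    Each dropped pair is (duplicate name, name of the copy that was kept).
    """
    first_seen = {}
    kept, dropped = [], []
    for name in sorted(images):  # sorted so the result is deterministic
        digest = hashlib.sha256(images[name]).hexdigest()
        if digest in first_seen:
            dropped.append((name, first_seen[digest]))
        else:
            first_seen[digest] = name
            kept.append(name)
    return kept, dropped


# Stand-in byte strings instead of real image files.
images = {"a.jpg": b"\x01\x02", "b.jpg": b"\x01\x02", "c.jpg": b"\x03"}
print(deduplicate(images))  # (['a.jpg', 'c.jpg'], [('b.jpg', 'a.jpg')])
```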

Select appropriate image annotation techniques such as bounding boxes, polygons, segmentation, or keypoints based on the problem you’re solving and the model architecture.

Document precise labeling rules to ensure consistency across annotators. Clear guidelines reduce ambiguity and prevent data noise during scaling.
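Some guideline rules can be written as automatic checks so that violations are caught before review. The following sketch assumes a hypothetical guideline with a fixed label list and a minimum box size; both values are placeholders for whatever your own guidelines specify.

```python
# Hypothetical guideline values for illustration only.
ALLOWED_LABELS = {"car", "pedestrian", "traffic_sign"}
MIN_BOX_SIDE_PX = 4


def validate(annotation, img_w, img_h):
    """Return a list of guideline violations for one box annotation."""
    problems = []
    if annotation["label"] not in ALLOWED_LABELS:
        problems.append(f"unknown label: {annotation['label']}")

    x_min, y_min, x_max, y_max = annotation["box"]
    if x_min < 0 or y_min < 0 or x_max > img_w or y_max > img_h:
        problems.append("box extends outside the image")
    if x_max - x_min < MIN_BOX_SIDE_PX or y_max - y_min < MIN_BOX_SIDE_PX:
        problems.append("box is smaller than the minimum size")
    return problems


print(validate({"label": "car", "box": (10, 10, 50, 40)}, 640, 480))  # []
print(validate({"label": "truck", "box": (630, 10, 650, 12)}, 640, 480))
```

Checks like these do not replace written guidelines. They only cover the rules that can be stated mechanically, leaving judgment calls such as occlusion or ambiguous classes to human reviewers.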

Perform manual or assisted image labeling using annotation tools. Annotators apply labels strictly according to guidelines, focusing on accuracy over speed.

Conduct quality checks through peer reviews, sampling, or inter-annotator agreement analysis to catch inconsistencies and errors early.
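For classification-style labels, inter-annotator agreement is often reported as Cohen's kappa, which corrects raw agreement for the agreement expected by chance: kappa = (p_o - p_e) / (1 - p_e), where p_o is the observed agreement and p_e the chance agreement. Here is a minimal sketch for two annotators, with made-up labels.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labeled the same items in the same order."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)

    # Observed agreement: share of items where both annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n

    # Chance agreement: based on how often each annotator uses each label.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)

    if expected == 1.0:  # both annotators used one identical label throughout
        return 1.0
    return (observed - expected) / (1.0 - expected)


annotator_1 = ["cat", "cat", "dog", "dog"]
annotator_2 = ["cat", "dog", "dog", "dog"]
print(cohens_kappa(annotator_1, annotator_2))  # 0.5
```

In this example the annotators agree on 3 of 4 items (0.75), but half of that agreement would be expected by chance, so kappa is 0.5.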

Refine annotations based on feedback, model performance, and edge cases discovered during testing. Annotation is an iterative process, not a one-time task.

Export annotated data in the required format and integrate it into the model training pipeline. Monitor model behavior to validate annotation effectiveness.
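As one example of an export format, the COCO detection layout is a JSON object with three lists: `images`, `annotations`, and `categories`, with boxes stored as `[x, y, width, height]`. The sketch below builds that structure from a simple in-memory representation. The input layout, file name, and labels are assumptions for this example; your training pipeline may require a different format or additional fields.

```python
import json


def to_coco(images, boxes, category_names):
    """Build a COCO-style dict from corner-format boxes.

    images: list of {"file_name", "width", "height"}
    boxes:  list of {"file_name", "label", "box": (x_min, y_min, x_max, y_max)}
    """
    categories = [{"id": i, "name": n} for i, n in enumerate(category_names, start=1)]
    category_id = {c["name"]: c["id"] for c in categories}

    coco_images = [{"id": i, **img} for i, img in enumerate(images, start=1)]
    image_id = {img["file_name"]: img["id"] for img in coco_images}

    annotations = []
    for i, b in enumerate(boxes, start=1):
        x_min, y_min, x_max, y_max = b["box"]
        w, h = x_max - x_min, y_max - y_min
        annotations.append({
            "id": i,
            "image_id": image_id[b["file_name"]],
            "category_id": category_id[b["label"]],
            "bbox": [x_min, y_min, w, h],  # COCO stores top-left corner plus size
            "area": w * h,
            "iscrowd": 0,
        })
    return {"images": coco_images, "annotations": annotations, "categories": categories}


dataset = to_coco(
    images=[{"file_name": "street_001.jpg", "width": 640, "height": 480}],
    boxes=[{"file_name": "street_001.jpg", "label": "car", "box": (100, 50, 300, 250)}],
    category_names=["car", "pedestrian"],
)
print(json.dumps(dataset, indent=2))
```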

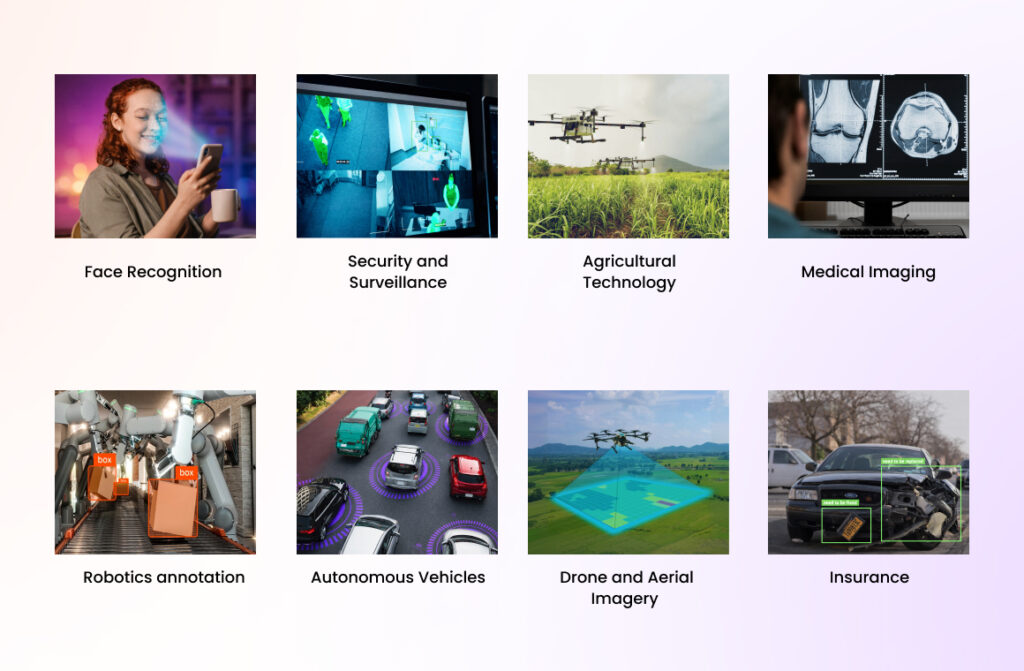

Image annotation plays a foundational role in training computer vision systems across industries. By accurately labeling visual data, it enables AI models to understand visual patterns, interpret complex scenes, and make reliable decisions in real-world environments.

Image annotation plays a critical role in training face recognition systems by labeling facial features, landmarks, and identities. Accurate annotations help models detect faces under varying lighting, angles, and expressions. This improves recognition accuracy while reducing false positives. Properly annotated datasets also help address bias and fairness concerns. Face recognition is widely used in authentication, access control, and identity verification systems.

In security and surveillance, image annotation enables systems to detect people, objects, and unusual activities. Annotated data helps models identify threats, track movement, and analyze behavior patterns. These systems rely on consistent labeling to work in real-time environments. High-quality annotation reduces false alarms and improves situational awareness. It is essential for smart cities and public safety applications.

Image annotation is used in AgriTech to identify crops, weeds, pests, and soil conditions. Annotated images help AI models monitor crop health and predict yields. This enables precision farming and optimized resource usage. Accurate labeling improves early detection of diseases and stress factors. AgriTech solutions rely on annotation to make data-driven farming decisions.

Medical imaging depends heavily on precise image annotation for detecting abnormalities, organs, and disease patterns. Annotated scans help train models used in diagnostics, treatment planning, and research. Accuracy is critical, as small errors can impact clinical outcomes. Annotation often requires domain expertise and strict quality control. It supports applications in radiology, pathology, and medical research.

In robotics, image annotation helps machines understand their surroundings and interact safely with objects. Annotated data trains robots to recognize obstacles, tools, and human actions. This improves navigation, object manipulation, and decision-making. High-quality annotations are essential for real-time robotic systems. Robotics applications span manufacturing, warehousing, and service automation.

Autonomous vehicles rely on image annotation to recognize roads, pedestrians, vehicles, traffic signs, and lane markings. Annotated datasets enable accurate perception and decision-making in complex driving environments. Precision and consistency are vital for safety-critical systems. Image annotation supports object detection, segmentation, and 3D perception. It is a foundational component of self-driving technology.

Drone and aerial imagery annotation helps analyze large-scale environments from above. Labeled images are used for land mapping, infrastructure monitoring, and environmental analysis. Annotation enables detection of buildings, roads, vegetation, and anomalies. This improves planning, inspection, and disaster response efforts. It is widely used in construction, energy, and urban planning.

In the insurance industry, image annotation supports damage assessment and claim automation. Annotated images help AI models evaluate vehicle damage, property loss, and risk factors. This speeds up claim processing and reduces manual inspection costs. Accurate annotation improves consistency and fraud detection. Insurance companies use these systems to enhance customer experience and operational efficiency.

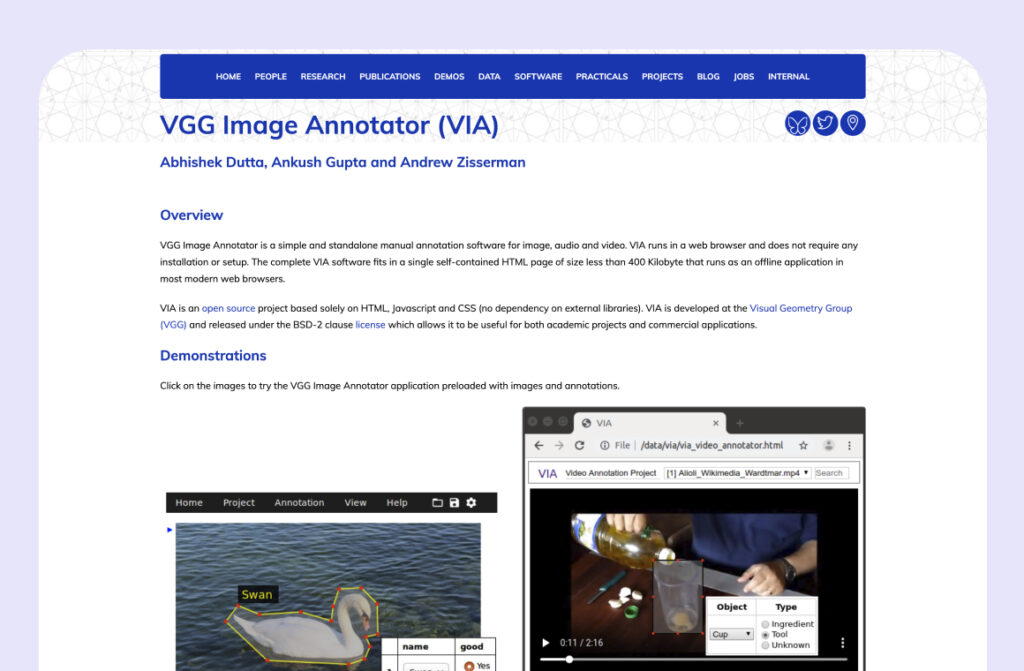

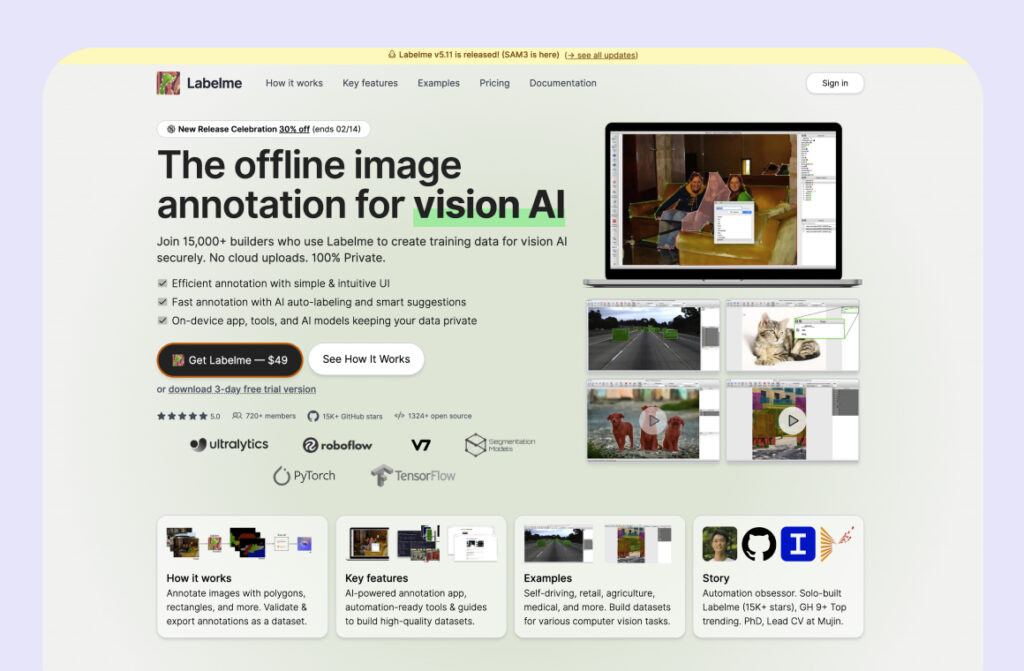

Take a look at image annotation tools and software in 2026.

The total number of images to be annotated directly impacts project cost. Larger datasets benefit from volume efficiencies but still require careful planning for quality control. High-volume projects often need scalable teams and automated workflows, which influence pricing models.

Different annotation types vary significantly in effort and cost. Simple tasks like image classification are less expensive than complex methods such as polygon annotation or pixel-level segmentation. The more detailed the annotation, the higher the time and expertise required.

Images with cluttered backgrounds, overlapping objects, poor lighting, or varying perspectives take longer to annotate. Complex scenes increase cognitive load for annotators and require stricter review processes. This complexity directly affects turnaround time and pricing.

Projects with strict accuracy thresholds demand multiple review cycles and senior annotators. Higher quality standards increase costs but are essential for production-grade AI models. Quality assurance is often a major cost driver in annotation projects.

Tight deadlines require larger annotation teams, parallel workflows, or extended working hours. Accelerated delivery increases operational costs and is reflected in pricing. Urgent projects still have to meet the same accuracy targets, so the extra speed is paid for with more people and more review capacity rather than lower quality.

Using specialized tools or integrating annotations into custom AI pipelines adds technical overhead. Custom formats, APIs, or workflow integrations require additional setup and maintenance. These technical requirements influence overall project pricing.
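The cost factors above combine roughly multiplicatively, which a back-of-the-envelope model makes easy to see. Every number in this sketch is a placeholder chosen for arithmetic convenience, not a market rate or a benchmark; substitute figures measured on a pilot batch of your own data.

```python
def estimate_cost(
    num_images,
    objects_per_image,
    seconds_per_object,
    review_overhead,
    hourly_rate,
):
    """Rough labor cost: labeling hours, scaled by review overhead, times hourly rate."""
    labeling_hours = num_images * objects_per_image * seconds_per_object / 3600
    return labeling_hours * review_overhead * hourly_rate


# Placeholder inputs (assumptions, not real pricing).
common = dict(num_images=10_000, objects_per_image=5, review_overhead=1.25, hourly_rate=10.0)

boxes = estimate_cost(seconds_per_object=12, **common)     # quick boxes
polygons = estimate_cost(seconds_per_object=60, **common)  # slower polygons

print(f"boxes:    {boxes:,.2f}")
print(f"polygons: {polygons:,.2f}")
print(f"ratio:    {polygons / boxes:.1f}x")
```

With everything else held constant, the cost ratio between two annotation types is simply the ratio of their per-object labeling times, which is why annotation type tends to dominate a quote.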

Image annotation is a foundational component of modern AI and machine learning systems, especially in computer vision applications. As image annotation services and image labeling scale across industries, it becomes essential to ensure that annotation practices comply with regulations and uphold ethical standards. Responsible image annotation protects individual rights, reduces bias, and ensures AI systems behave fairly in real-world environments. This section focuses on three critical areas: data privacy and GDPR compliance, bias and fairness in image annotation, and ethical governance.

The General Data Protection Regulation (GDPR) significantly impacts how image annotation services handle visual data that may include identifiable individuals. Since images can contain personal or sensitive information, organizations must treat annotated datasets as regulated data assets. GDPR generally applies when image labeling involves personal data of individuals in the EU, regardless of their citizenship, or when the data is processed by an organization established in the EU.

Key GDPR considerations in image annotation include:

a. Consent

Organizations need a lawful basis under GDPR before using images of identifiable people for annotation. Where that basis is consent, it must be explicit and informed: consent documentation should clearly state the purpose of image labeling and how the data will be used, and individuals must be able to withdraw consent at any time.

b. Anonymization

Personally identifiable information such as faces, license plates, or unique features should be anonymized or masked during image annotation whenever possible. Proper anonymization reduces privacy risks while preserving dataset usability.
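Masking can reuse the annotation itself: once faces or license plates have boxes, those regions can be overwritten before the image goes to the wider team. The sketch below works on a grayscale image stored as a list of rows so it runs without an imaging library, and it uses a solid fill, which removes the pixel data outright, whereas a light blur may leave recoverable detail. Whether a given masking approach counts as anonymization in the legal sense is a question for your compliance team, not something this code settles.

```python
def mask_regions(pixels, regions, fill=0):
    """Return a copy of the image with each region overwritten by `fill`.

    pixels:  list of rows of grayscale values
    regions: list of (x_min, y_min, x_max, y_max), with x_max and y_max exclusive
    """
    out = [row[:] for row in pixels]  # copy so the original stays untouched
    height = len(out)
    width = len(out[0]) if out else 0
    for x_min, y_min, x_max, y_max in regions:
        # Clamp to the image bounds so oversized boxes do not raise errors.
        for y in range(max(0, y_min), min(height, y_max)):
            for x in range(max(0, x_min), min(width, x_max)):
                out[y][x] = fill
    return out


image = [[9] * 4 for _ in range(4)]
face_boxes = [(1, 1, 3, 3)]  # hypothetical face region
for row in mask_regions(image, face_boxes):
    print(row)
```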

c. Data Security

Secure storage, controlled access, and encryption are essential to protect annotated images from unauthorized access or misuse. Data security is a core requirement for any professional image annotation service.

d. Data Retention

Annotated image datasets should only be retained for as long as they serve a legitimate purpose. GDPR grants individuals the right to request deletion, making lifecycle management a key part of responsible image labeling.

Bias introduced during image annotation can propagate into AI models, leading to inaccurate or discriminatory outcomes. Addressing bias is a critical responsibility in image annotation techniques used for training AI systems.

Key considerations for fairness include:

a. Diversity and Representation

Annotation teams and datasets must reflect real-world diversity. Inclusive representation helps reduce systemic bias in labeled image data and improves model reliability.

b. Bias Detection and Review

Regular audits and review mechanisms should be implemented to identify bias patterns in annotated datasets. Continuous evaluation helps maintain fairness throughout the image annotation lifecycle.

c. Guidelines and Training

Clear annotation guidelines and ethical training help annotators avoid stereotypes and subjective labeling. Consistent rules improve both data quality and fairness.

d. Fairness Metrics

Organizations should define measurable fairness indicators and monitor how annotated data influences model behavior. Transparency in reporting bias-related findings is essential for trust.
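One simple fairness indicator is the gap in recall between groups: of the objects or people that should have been detected, what share was detected in each group, and how far apart are the best and worst groups? The sketch below assumes each evaluation record carries a group tag; the group names and counts are synthetic and exist only to make the arithmetic visible.

```python
from collections import defaultdict


def recall_by_group(records):
    """records: iterable of (group, was_detected) pairs for ground-truth positives."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for group, detected in records:
        totals[group] += 1
        hits[group] += int(detected)
    return {g: hits[g] / totals[g] for g in totals}


def recall_gap(per_group):
    """Difference between the best and worst group recall."""
    return max(per_group.values()) - min(per_group.values())


# Synthetic evaluation results for two hypothetical groups.
records = (
    [("group_a", True)] * 9 + [("group_a", False)] * 1
    + [("group_b", True)] * 6 + [("group_b", False)] * 4
)

per_group = recall_by_group(records)
print(per_group)                        # {'group_a': 0.9, 'group_b': 0.6}
print(round(recall_gap(per_group), 2))  # 0.3
```

A large gap does not by itself say whether the cause is the labels, the data mix, or the model, but it tells you where to audit the annotations first.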

Beyond legal compliance, ethical responsibility plays a vital role in image annotation for AI/ML systems. Ethical guidelines ensure that image labeling practices respect human dignity and societal values.

Key ethical principles include:

a. Respect for Human Rights

Image annotation projects must safeguard individual privacy and avoid misuse of sensitive visual data. Ethical annotation respects personal identity and contextual integrity.

b. Ethical Review Processes

Establishing an ethical review framework allows organizations to assess the societal impact of annotation projects before deployment. Expert oversight helps prevent unintended harm.

c. Transparency

Clear communication about why images are annotated and how they will be used builds trust. Transparency is a cornerstone of responsible image annotation services.

d. Accountability

Organizations should assign clear ownership for ethical compliance. Accountability ensures that ethical standards are enforced throughout the image labeling workflow.

Image annotation is a critical enabler of intelligent AI systems, but its impact extends beyond technical performance. Responsible image annotation services must prioritize GDPR compliance, fairness, and ethical integrity to ensure safe and trustworthy AI deployment. By embedding these principles into annotation workflows, organizations can build AI solutions that are not only accurate but also socially responsible and sustainable.

Image annotation continues to evolve as AI systems become more advanced and data-intensive. Emerging trends are reshaping how image annotation services operate, focusing on speed, accuracy, and scalability. These trends reflect the growing need for smarter, more adaptive image labeling approaches in real-world AI deployments.

AI-assisted annotation is becoming a standard practice in modern image annotation workflows. Machine learning models are used to pre-label images, which human annotators then review and refine. This human-in-the-loop approach significantly reduces annotation time while maintaining accuracy. AI integration also helps standardize labeling across large datasets. As models improve, AI-driven annotation will continue to accelerate dataset creation.
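A human-in-the-loop workflow needs some rule for how much attention each pre-label gets. One possible approach, sketched here under the assumption that the model attaches a confidence score to each pre-label, is to review the least confident items first and to flag low-confidence ones for a full redraw rather than a quick confirmation. The threshold and field names are illustrative.

```python
def build_review_queue(prelabels, confirm_threshold=0.8):
    """Order model pre-labels for human review, least confident first.

    Every item still goes to a human; the score only decides the kind of review.
    """
    queue = []
    for item in sorted(prelabels, key=lambda p: p["score"]):
        task = "quick_confirm" if item["score"] >= confirm_threshold else "full_review"
        queue.append({**item, "task": task})
    return queue


# Hypothetical pre-labels produced by a model.
prelabels = [
    {"id": 1, "label": "car", "score": 0.95},
    {"id": 2, "label": "car", "score": 0.40},
    {"id": 3, "label": "pedestrian", "score": 0.80},
]

for entry in build_review_queue(prelabels):
    print(entry["id"], entry["task"])
```

Model confidence is not the same as correctness, so it is worth periodically sampling high-confidence items for full review to check that the threshold still holds.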

Real-time image annotation is gaining importance in applications where immediate feedback is required. Industries such as autonomous driving, surveillance, and robotics rely on systems that annotate and process visual data instantly. Real-time annotation enables faster decision-making and adaptive learning. This trend pushes annotation tools toward higher performance and lower latency. It also demands stronger automation and quality control mechanisms.

With the rise of LiDAR, depth sensors, and 3D cameras, annotating 3D data is becoming increasingly critical. 3D annotation provides spatial context that 2D images cannot capture. It is essential for autonomous vehicles, robotics, and digital twin applications. Annotating 3D point clouds and volumetric data requires specialized tools and expertise. This trend reflects the shift toward more immersive and accurate AI perception systems.

Data augmentation is evolving beyond basic transformations like rotation or scaling. Advanced augmentation techniques simulate real-world variations to improve model robustness. When combined with high-quality image annotation, augmented data helps models generalize better across environments. Enhanced augmentation reduces dependency on massive raw datasets. This trend improves training efficiency while maintaining performance.
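Whatever the augmentation, the annotation has to be transformed together with the image, or the labels end up pointing at the wrong pixels. The sketch below shows this for the simplest case, a horizontal flip, using a tiny image stored as rows of values and a box in `(x_min, y_min, x_max, y_max)` form with exclusive maximum edges. It is a toy illustration of the principle, not a replacement for an augmentation library.

```python
def hflip_image(pixels):
    """Mirror an image (list of rows) left to right."""
    return [row[::-1] for row in pixels]


def hflip_box(box, img_w):
    """Mirror a (x_min, y_min, x_max, y_max) box to match a horizontally flipped image."""
    x_min, y_min, x_max, y_max = box
    return (img_w - x_max, y_min, img_w - x_min, y_max)


# One-row image, 6 pixels wide, with an "object" (value 7) in columns 1 and 2.
image = [[0, 7, 7, 0, 0, 0]]
box = (1, 0, 3, 1)

flipped_image = hflip_image(image)
flipped_box = hflip_box(box, img_w=6)

print(flipped_image)  # [[0, 0, 0, 7, 7, 0]]
print(flipped_box)    # (3, 0, 5, 1)
```

The flipped box covers columns 3 and 4, which is where the object now sits. Flipping twice returns the original box, a cheap sanity check for any geometric augmentation.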

In the realm of artificial intelligence and machine learning, image annotation plays a central and indispensable role. It is a strategic foundation that directly determines how well computer vision systems perform in real-world conditions. From selecting the right annotation techniques to ensuring data quality, regulatory compliance, and ethical integrity, every decision made during image annotation shapes the reliability, fairness, and scalability of AI models. As industries increasingly depend on AI-driven insights, the role of precise and thoughtful image labeling becomes even more critical.

Looking ahead, the future of image annotation will be defined by intelligent automation, real-time processing, and expansion into 3D and multimodal data. AI-assisted annotation and enhanced data augmentation will reduce manual effort, but human expertise will remain essential for judgment, context, and ethical oversight. Ultimately, success in computer vision will depend not on the volume of data collected, but on the quality, responsibility, and foresight applied in how that data is annotated.

FAQ

Image labeling is often used as a general term, while image annotation refers to the full process of adding structured, machine-readable information to images. Labeling is usually one part of annotation.

Accuracy requirements depend on the use case. Safety-critical applications like healthcare or autonomous systems demand extremely high precision, while exploratory models may tolerate lower accuracy initially.

AI can assist annotation, but full automation is rare. Human oversight is still necessary to handle edge cases, correct model bias, and ensure contextual accuracy.

Quality is measured using metrics such as inter-annotator agreement, precision checks, audit sampling, and downstream model performance.

Annotation is not a one-time task; it is iterative. As models evolve and new data is introduced, annotations often need refinement to maintain performance.